Android privacy: is it a joke or the punchline?

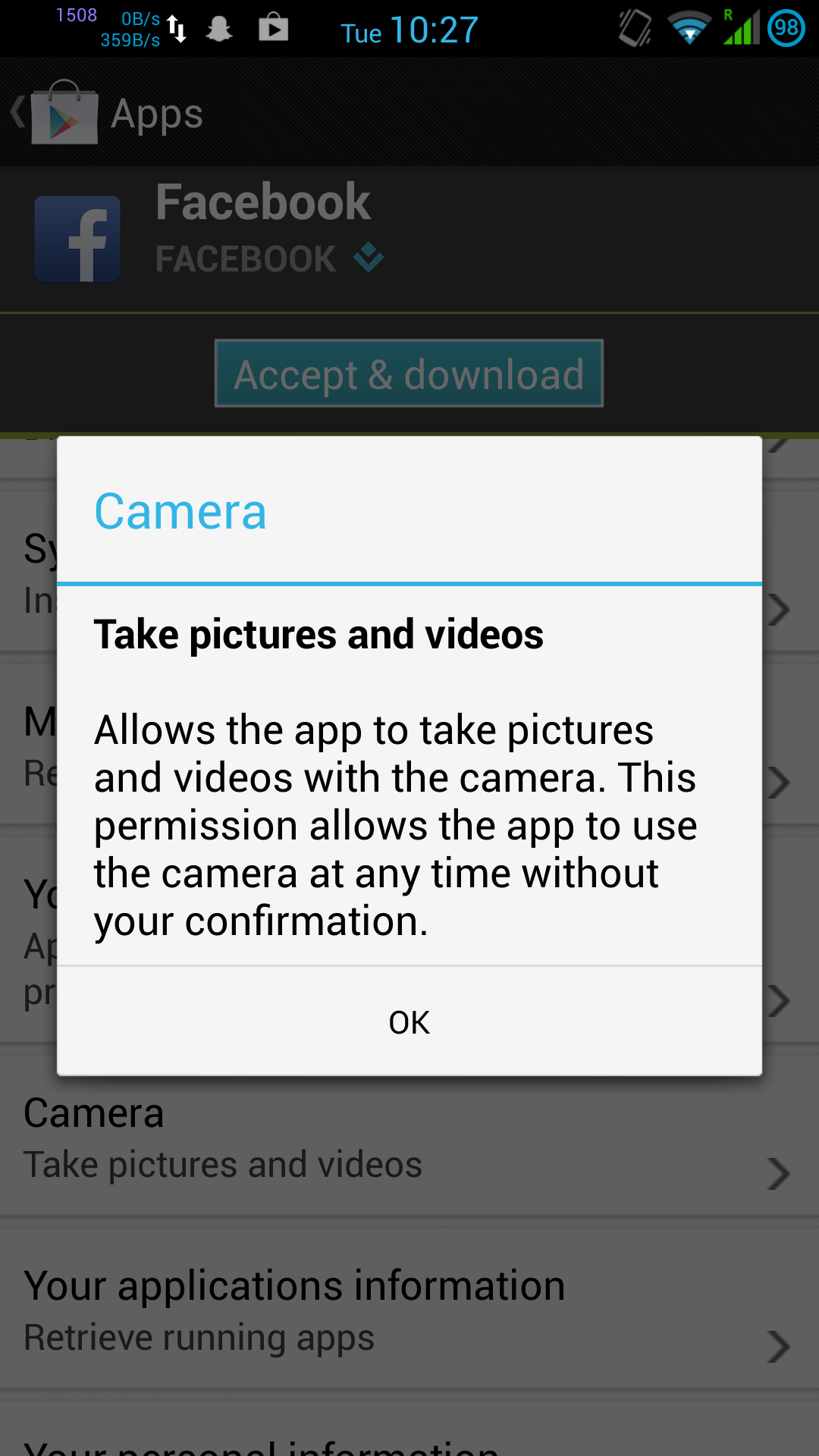

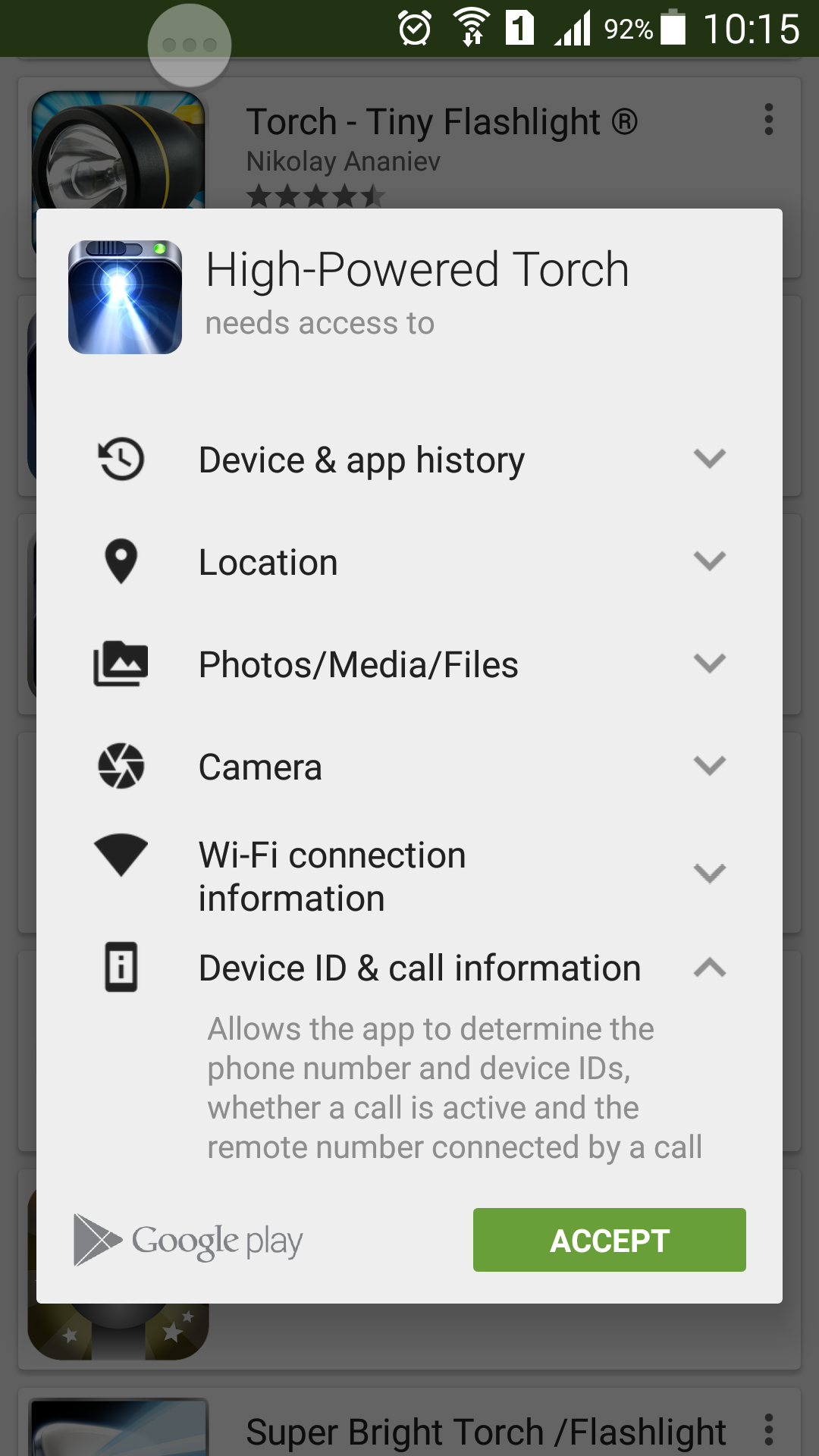

Every technical Android user knows the problem. You want to install a simple app, such as a torch, but you’re greeted with this:

This torch app “only” requires access to your personal files, phone calls, location and identity… and of course the actual camera flash itself, which seems like more of an afterthought.

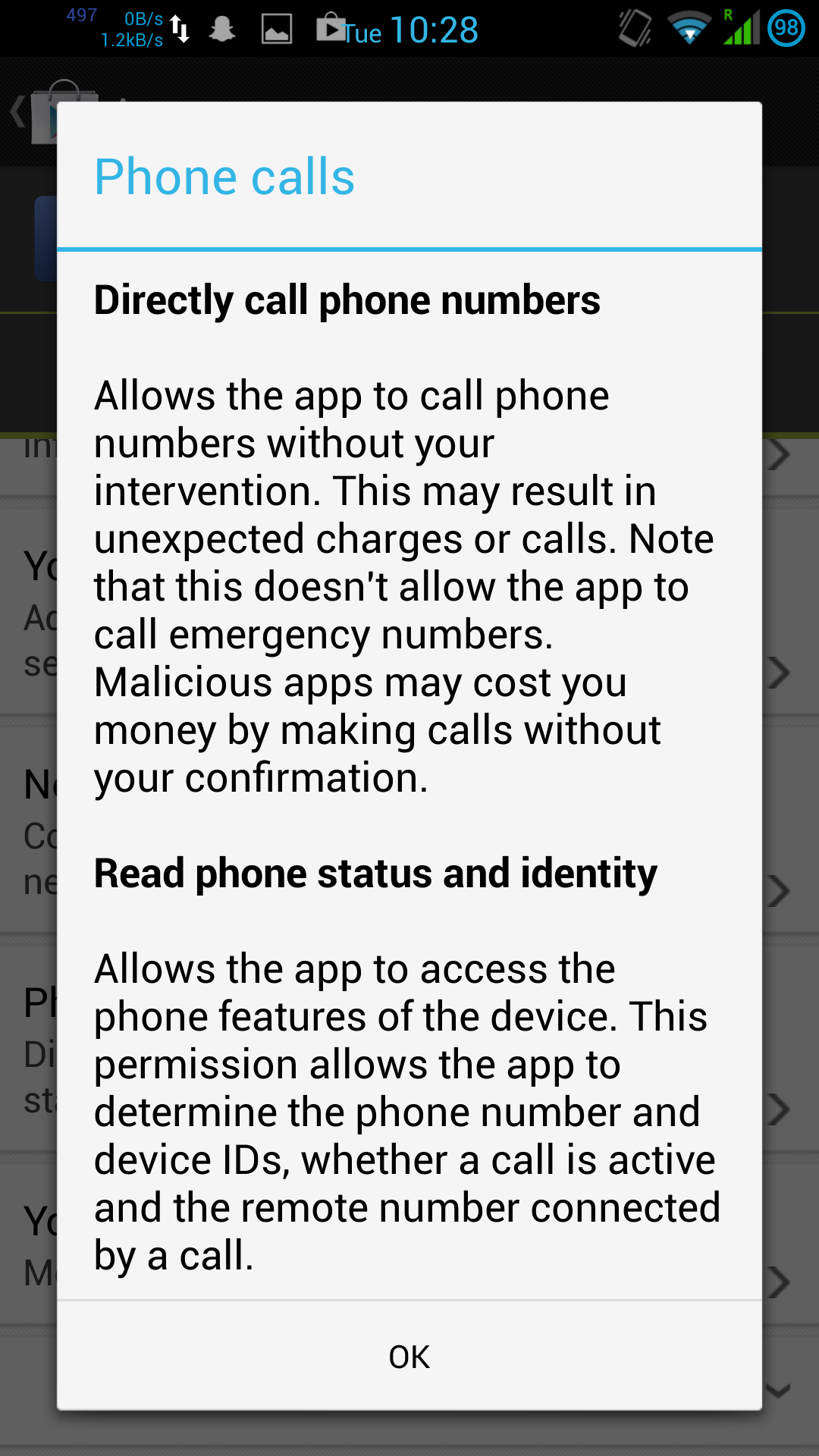

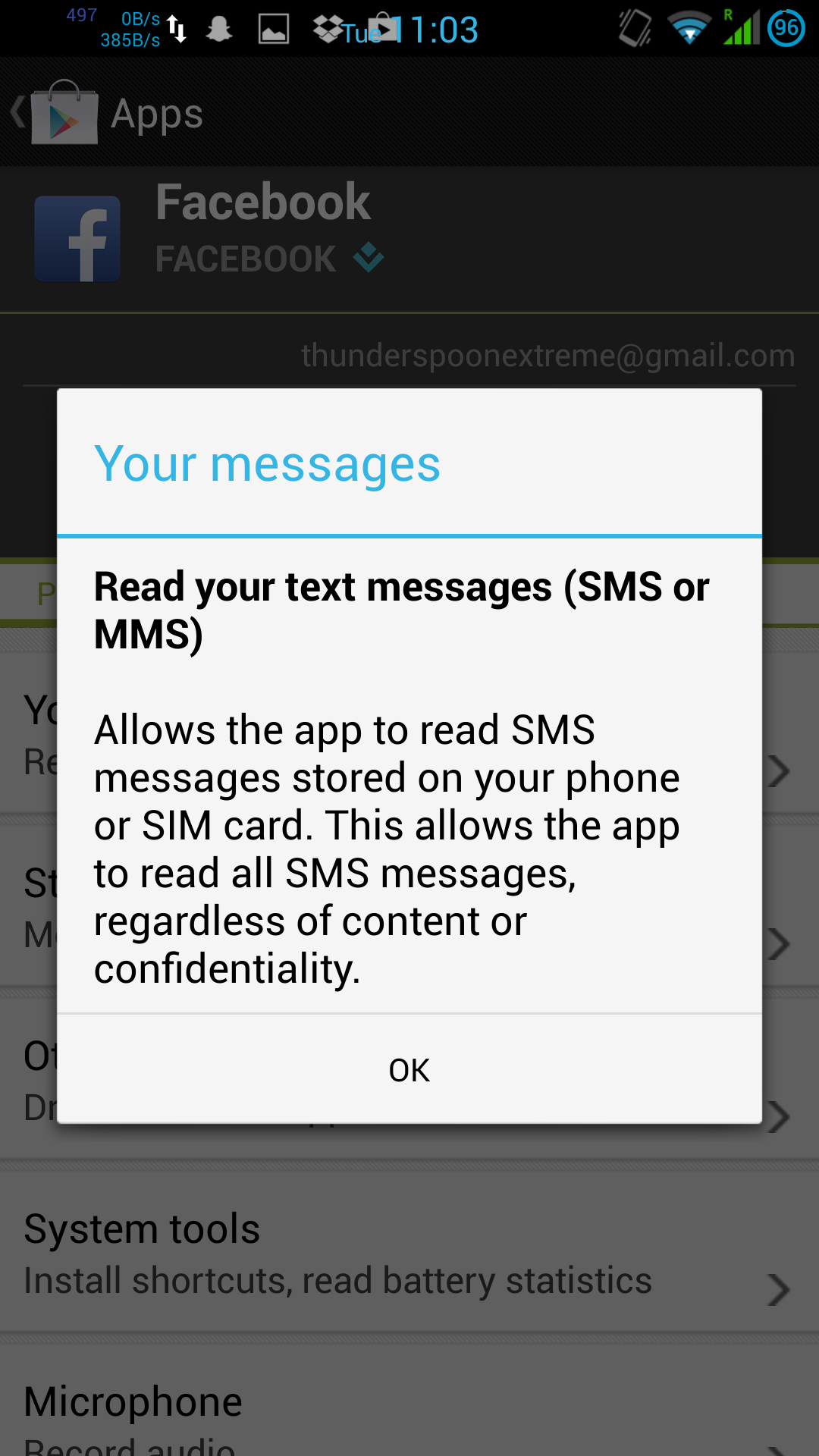

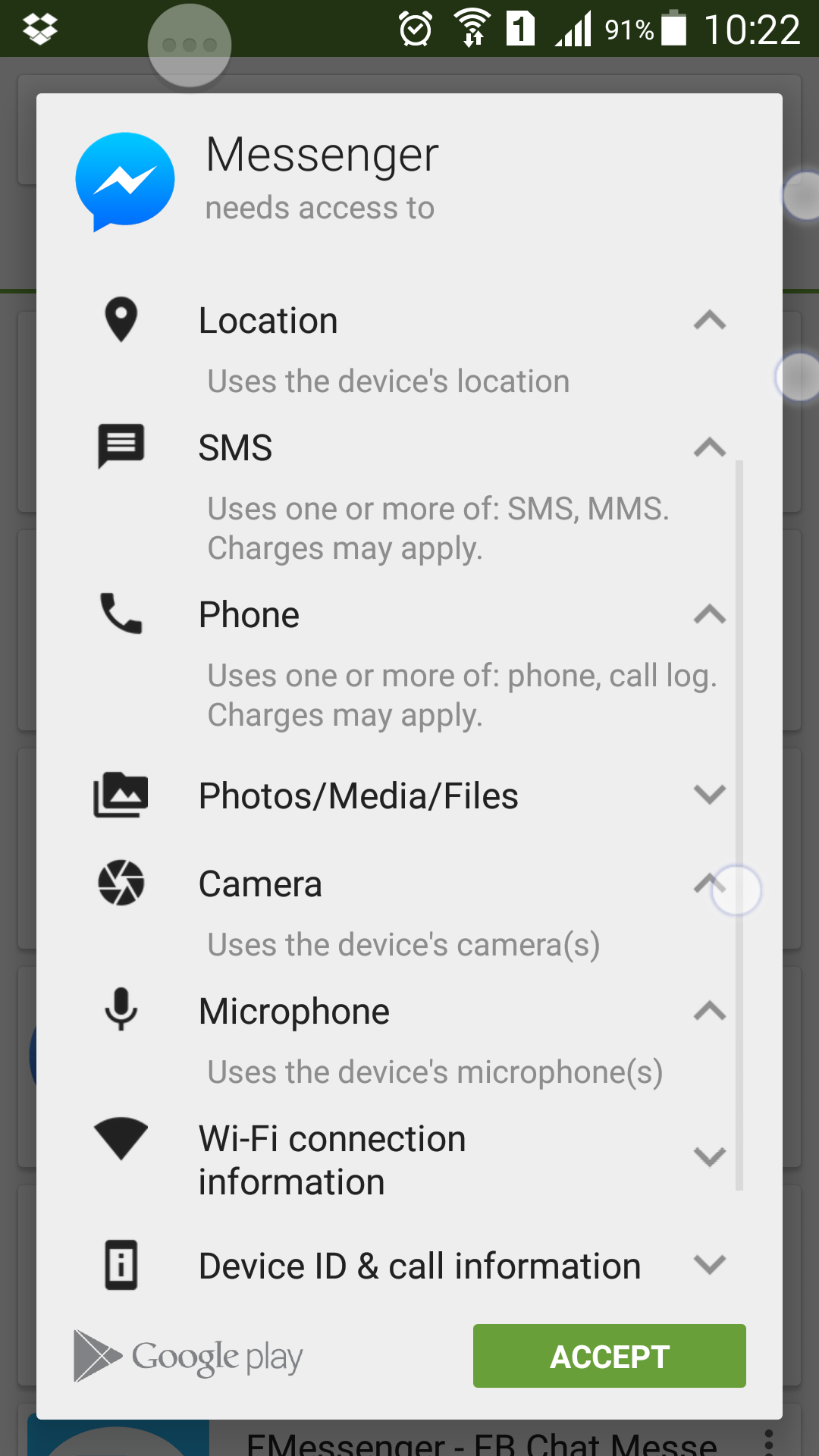

Likewise, the common Facebook Messenger app is also rather greedy with permissions:

Actually, there’s a little more to it than that. An update to the Google Play Store makes app permissions more “user friendly” by hiding the extent to which the application wants to access your personal data. On an older version of the Play Store, the permissions for Facebook and Messenger read more like this:

Google have actively tried to obfuscate the extent to which they allow their own and third-party software to violate the privacy of Android users. I use the Facebook apps as an example here, but there are plenty of other widely used invasive apps around (Google’s own in particular).

How does this extreme cyberstalking survive?

This survives the same way that Apple products do, given their terms and conditions… Users either don’t notice the invasive terms that they’re agreeing to, or they don’t realise the consequences of them.

Even if you don’t install these malicious apps, your communications are insecure. If any of the people you communicate with have a spyware-laden app, it can see both sides of the conversation between you and that person. Android/iOS privacy violation operates in a similar way to herd immunity, but for the worse instead of for the better.

So what can be done about it?

By Google? Nothing, it’s their business model after all:

- Mine as much personal information as possible from anyone and everyone

- ???

- PROFIT!!

* ??? = targeted advertisements

By free open-source mods and ROMs (e.g. Cyanogen)? Plenty.

The Xposed module “App Settings” allows one to revoke permissions from an app, often causing it to crash when it attempts to use that permission.

This is an “opt-in” security measure in the sense that one must actively revoke the permission after installing the app. As an anecdote, when revoking Facebook Messenger’s ability to monitor the screen contents, I noticed that Messenger would crash (“Forced Close”) whenever I opened a WhatsApp conversation. This was before Facebook bought WhatsApp of course.

But the problem is that security and privacy are still on an opt-in per-app basis.

Inversion of control: Power to the user

By default, our system allows malicious spyware and trojans to act as soon as we hit “install”, then we must manually disable them afterwards. Disabling them may also cause the app to crash frequently, making the part of it that’s actually useful now inaccessible. What we need is an inversion of control – instead of the permissions being “All or nothing” on installation and “Access all areas unless I stop you” afterwards, we need to give full control back to the user.

Ideally the user decides which permissions they’re happy for any app to use, and therefore which permissions are “private”. When an app attempts a “private” activity, it receives mock data rather than an exception, so instead of crashing, the app simply works with false information. If we decide that an app should be able to access some “private” area, we manually grant it permission to get the “real” data instead of mock data. Now access to private information is “opt-in” instead of “opt-out”, and it is the security that becomes “opt-out”. We could take this further by allowing a timeout on the “opt-in”, so we could allow Facebook access to our location for the next 30 minutes and have that access expire automatically afterwards.
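To make the idea concrete, here is a minimal sketch of such a broker in Python. It is a toy model and not an Android API: `PermissionBroker`, its methods, the permission names and the app names are all hypothetical, invented for illustration. It only shows the three rules described above: private permissions return mock data by default, a grant switches an app to real data, and a grant can carry a timeout.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, Optional, Set, Tuple


@dataclass
class PermissionBroker:
    """Hypothetical mediator that sits between apps and private data."""

    # Permissions the user has marked as "private".
    private: Set[str]
    # Injectable clock so that timeouts can be tested without sleeping.
    clock: Callable[[], float] = time.monotonic
    # (app, permission) -> expiry time, or None for "until revoked".
    grants: Dict[Tuple[str, str], Optional[float]] = field(default_factory=dict)

    def grant(self, app: str, permission: str,
              seconds: Optional[float] = None) -> None:
        """Opt in: let `app` see real data, optionally only for `seconds`."""
        expiry = None if seconds is None else self.clock() + seconds
        self.grants[(app, permission)] = expiry

    def revoke(self, app: str, permission: str) -> None:
        self.grants.pop((app, permission), None)

    def is_granted(self, app: str, permission: str) -> bool:
        if permission not in self.private:
            return True  # non-private permissions are open to every app
        key = (app, permission)
        if key not in self.grants:
            return False  # private and never granted
        expiry = self.grants[key]
        if expiry is not None and self.clock() >= expiry:
            del self.grants[key]  # the timed grant has lapsed
            return False
        return True

    def read(self, app: str, permission: str,
             real: Callable[[], Any], mock: Callable[[], Any]) -> Any:
        """Return real data if granted, otherwise mock data. Never raises."""
        source = real if self.is_granted(app, permission) else mock
        return source()
```

A caller would wrap each sensitive data source in a `real` function and pair it with a `mock` function that returns plausible but false values, for example `broker.read("facebook", "location", real_gps, fake_gps)`, where `real_gps` and `fake_gps` are again placeholders. The key design choice is that the denied path returns a value of the same shape as the real one, so the app has nothing to crash on.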

So if “location” is amongst our list of “private” permissions, then when Facebook requests our location it gets mock data: we could be atop K2 for all Facebook knows (although IP geolocation would undermine this somewhat…). When it requests access to our phone calls or SMS messages, it gets fake data. If it requires access to messages to validate our number or device, then we can grant it access to new messages only, and only for the next 5 minutes, then have it automatically receive mock data again after that.
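The “new messages only, for 5 minutes” rule can be sketched in the same spirit. Again this is an illustrative Python model, not real Android code: `Message`, `MOCK_INBOX` and `visible_messages` are hypothetical names, and timestamps are plain seconds on whatever clock the caller uses.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Message:
    sender: str
    body: str
    received_at: float  # seconds, on the same clock as `now`


# What an app sees whenever it has no live grant (placeholder content).
MOCK_INBOX = [Message("mock", "placeholder message", 0.0)]


def visible_messages(inbox: List[Message], now: float,
                     granted_at: Optional[float] = None,
                     window: float = 300.0) -> List[Message]:
    """Return what an app may see of `inbox` at time `now`.

    Outside the grant window the app gets the mock inbox. Inside it,
    the app gets only messages that arrived after the grant was made,
    so older conversations stay hidden.
    """
    if granted_at is None or not (granted_at <= now < granted_at + window):
        return list(MOCK_INBOX)
    return [m for m in inbox if m.received_at >= granted_at]
```

With a grant made at `granted_at=1000.0`, a verification text arriving at `1010.0` is visible to the app until `1300.0`, while anything received before the grant never is.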

Flow diagrams: Current privacy model vs. proposed inversion of control
